In the Future, Everyone’s an Architect (And Why That’s a Good Thing) Part 1

by Eric J. Cesal

Designer, Educator, Writer, and noted Post-disaster Expert

April 26, 2023

Eric Cesal boldly explores the architect’s role over time and the future impacts of artificial intelligence on practice.

Editor’s Note: In an exploration of this Quarter’s theme, Contextual Awareness, DesignIntelligence offers an intriguing two-part series, including a mind-blowing video by author Eric Cesal. Read the second installment in Part 2.

ARCHITECTS IN TIME

When I was first invited to contribute on the theme of contextual awareness, there didn’t seem to be anything to talk about except time. Having practiced architecture all over the world, I appreciate how important it is to be aware of one’s context. However, those experiences taught me that knowing when you are is at least as important as knowing where you are. The “when” dimension is also the one we architects always seem to get wrong.

Architecture is always lagging. We’ve lagged in adopting new technologies - embracing reinforced concrete technology half a century after engineers did, and embracing CAD/CAM technology decades after the aerospace industry pioneered it. We trail our peers in medicine and law in achieving diversity. Many architecture schools still rigidly adhere to a 20th century instruction model, which was meant to simulate a 19th century practice model, which we attempt to remedy by interjecting 21st century technology into the studio.

Something is ‘out of time’ about architects. Maybe that’s because there is something fundamentally timeless about architecture (good architecture, anyhow). Perhaps because our work is evaluated over decades and centuries, we move through time at a different pace than doctors, lawyers or engineers.

An architect’s core function is as a translator, one who mediates between client desires and the public trust, and that hasn’t changed much in centuries. Architects translate their clients’ desires and intentions into built form. It’s a task that requires extensive, expert knowledge of myriad technical fields, and general knowledge of many other fields. Done well, it requires empathy - the kind that allows you to intuit a client’s spoken and unspoken intentions.

It also requires an ability to translate those intentions into multiple dialects: the architect must re-articulate those intentions in the languages of the contractor, the code official, the review board, et al. She must also be able to represent those intentions in multiple non-verbal communication forms including sketches, construction documents, specifications, 3D building information models and dozens of others.

It seems improbable this fundamental role would change, seeing how it has withstood all the technological and sociological shifts to date. However, my background in disaster reconstruction cautions me against this kind of “so far, so good” thinking. Things are only ever in stasis until provoked out of stasis, usually because of some cataclysm, black swan event, or technological revolution. Indeed, architecture was born of such a revolution.

I maintain that the profession of architecture owes its existence to a particular technological revolution: the elevator safety brake. This invention kicked off a global technological arms race among engineers to make elevators faster, safer, and more accessible. In the process, they made tall buildings practical for everyday human use. The progress of elevator technology inspired a similar technological push in building science. As cities pushed skyward, their growth furthered the case that specialized, licensed professionals were necessary to protect the public’s safety in the ancient, but newly complex endeavor of designing and building buildings.

IMAGINING FUTURE PRACTICE

What might the future of practice hold? Taking a page from my friends at The Long Now Foundation, I began to imagine the present as a midpoint on a long continuum. In this case, the continuum stretches backwards to the professionalizing of architecture in 1897 and extends another 125 years into the future, offering a way to understand an architect’s own temporal context today. But looking that far into the future can get a bit fuzzy. In lieu of idle daydreaming, I took a science-fiction prototyping (SFP) approach, blended with a McKinsey 3 Horizons approach, to look for the seeds of an architectural future here in the present.

Let’s lay the foundation. Science Fiction Prototyping is a technique first introduced by Brian David Johnson, then a futurist working at Intel, which aimed to imagine the future without getting lost in the messy business of forecasting. The technique principally involves creating stories about the future by extrapolating current trends in research and innovation. By grounding the affair in storytelling, the future is given structure (assuming your story has structure). The invention of the whomajigger was necessarily preceded by the invention of the whatchamacallit, and so forth. Plausibility is what distinguishes good science fiction from the rest. We can suspend disbelief because it seems like a future that could happen. And by auguring towards good science fiction, one augurs towards a plausible version of the future.

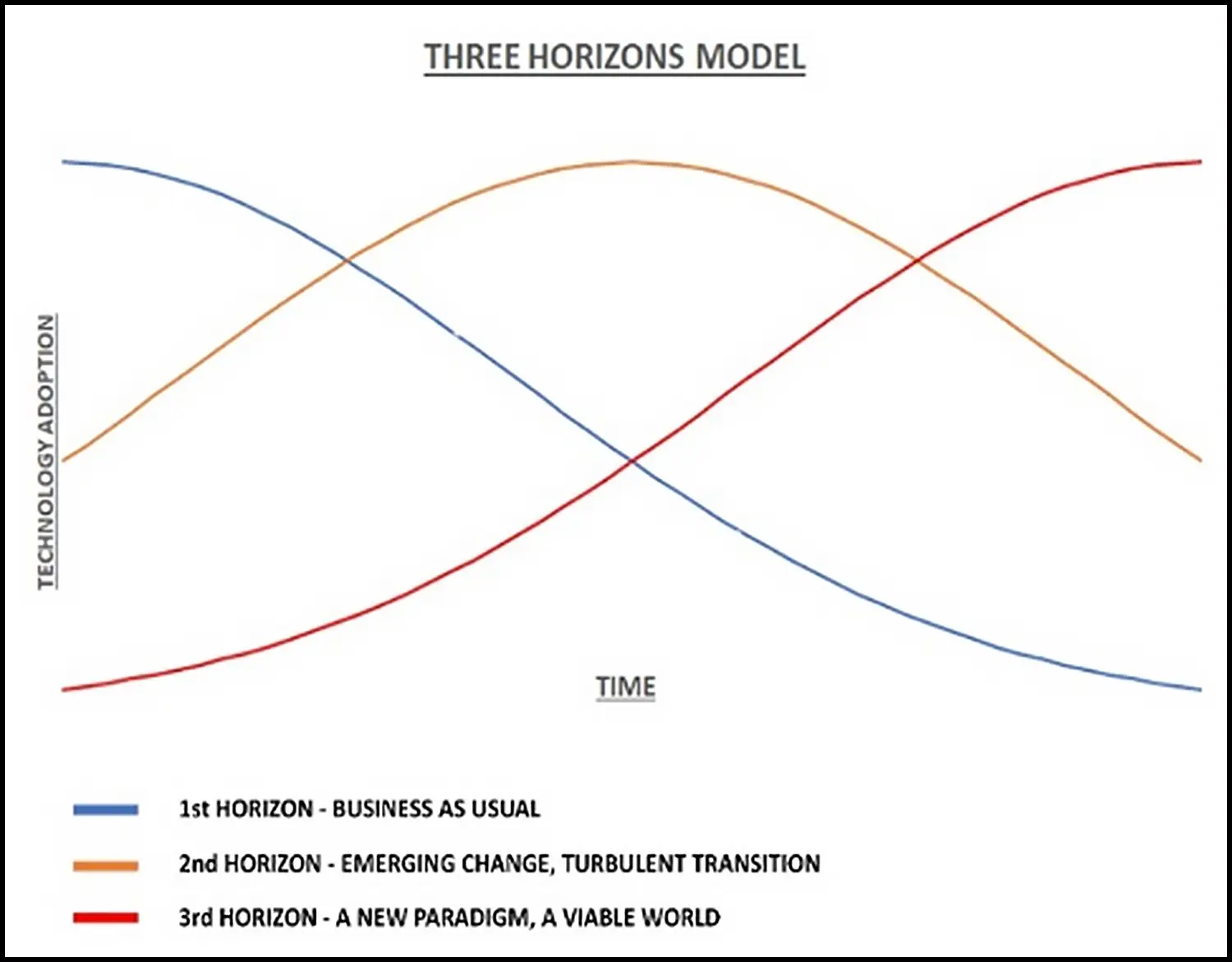

McKinsey’s 3 Horizons model is a similar device. It also holds that seeds of the future are perceptible in the present. In other words, the future is already being invented; it may just not look like anything remarkable yet. An executive who wants to stay relevant must balance three tasks: maintaining the 1st horizon, navigating the 2nd horizon, and anticipating the 3rd horizon.

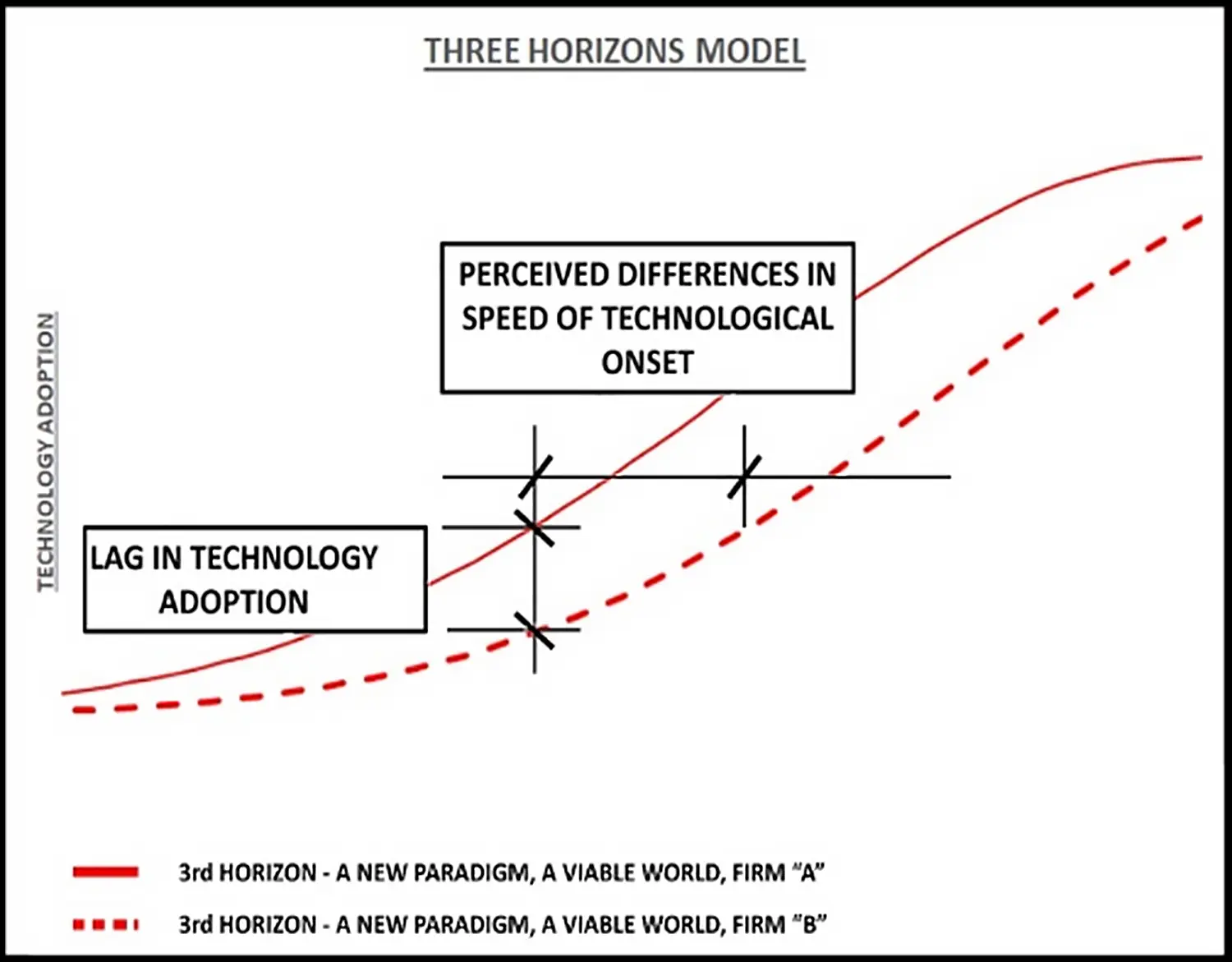

A weakness of the 3 Horizons Model is that it depends on an individual executive’s subjective perception of the future, and how fast it’s approaching. That’s overcome by baselining one future perception against another. In Figure 2, we see two understandings of the future. Firm A understands the distribution of the 3rd Horizon curve as much tighter. To Firm A, the future is approaching faster, which will, of course, inform their plans to adapt to it. Firm B (shown dashed) may understand the exact same future - the same technology, the same social changes, etc. - but perceive it as approaching more slowly.

Firm B will therefore likely have a different approach to the future, due to embracing it with less urgency. At any point in time, Firm B is behind Firm A in its technological adoption and preparation for the future (y-axis), because it perceives the onset of technology as being farther out in the future (x-axis).

Figure 1: The Three Horizons Model, after McKinsey and Co.

Figure 2: Two Understandings of the Future.

HOW FAST IS THE FUTURE APPROACHING?

Just how fast is the future approaching? The recent flurry of interest over Natural Language, Generative AIs (NLGAI)1 like ChatGPT and Stable Diffusion seems to have ignited another round of wild speculation and claims that the robot takeover is around the corner. Futurist Chicken Littles have been saying that since at least the Industrial Revolution, and yet there are more people (and more architects) than ever, vs. a relatively scant few robots. Our most advanced robots still struggle with things easily mastered by five-year-olds. As reassuring as I find that observation, I’ve seen The Terminator more than once, and I’m perpetually mindful of architecture’s sluggish history where technology is concerned.

To resolve this conundrum, I turned to an SFP approach, to generate a story about the future practice of architecture. I sought one grounded in today’s technology, while benchmarking the present as a midpoint in the long continuum of architectural practice.

Survey the recent cacophony around NLGAI and architecture, and you’ll find a good deal of the kind of “special pleading” identified by Richard Susskind in his 2016 best-seller “The Future of the Professions: How Technology Will Transform the Work of Human Experts.” In it, Susskind asserts:

“They [professionals] accept that the professions in general are in need of change, but they maintain that their own particular fields are immune. Exploiting the asymmetry of knowledge, we are told that you don’t understand. This claim tends to be followed by a list of characteristics of their work that make change inappropriate.”

In a hypothetical architecture firm, Lang, Shelley & Associates (LSA),2 you might hear this response:

‘Of course technology is going to change work, but it will mostly automate [the parts of the job I already dislike] and [someone else’s job]. It can’t threaten an architect’s core work, because architecture requires creativity and empathy, which computers cannot emulate.’

Perhaps LSA has a point. Many readers have already incorporated some forms of artificial intelligence (AI)3 into their practices, and the need for human talent is still high. But within the confines of AI’s current use, it doesn’t challenge an architect’s fundamental role as translator, because clients still need architects to facilitate the translation from intention, through complicated software, around byzantine building codes, over technical challenges, and into the built environment. Moreover, all computer programs, no matter how intelligent, are still bound by the GIGO Law (“Garbage In, Garbage Out”). Without knowledgeable architects to provide the right input to any generative algorithm, its output is worse than useless; it’s dangerous.

I’m not here to argue whether architecture does or doesn’t require [blank]. I’ll only point out that Susskind’s ‘special pleading’ above is the death rattle of every profession that has ever fallen under the wheel of technology. Professions that believe they can be replaced usually take steps to avoid it, while professions that myopically think they can’t be replaced (e.g., elevator operators) usually end up getting replaced precisely because they took no steps to moderate technology’s advance.

But that’s not us, right? Right?

THE BLIND SPOTS: TWO ASSUMPTIONS

Last year, in a lecture on the future of design practice, I opined to a student audience that the biggest mistake architects make when thinking about the future is assuming that they will be a part of it. This cognitive bias is dangerous when applied to any technological innovation; it allows one to consider: “How will I use this new technology to augment my services?” and avoid the more depressing questions: “Will my services even be required?” and “Will this technology replace my services?”

In surveying the popular and academic literature around AI and architecture, there seems to be a consensus that these new AI-driven technologies will be rapidly integrated into the architect’s toolbox, nestled betwixt Grasshopper and some neglected drafting dots, assuming we obey the authors’ injunctions to get in front of the technological curve, and start shaping these technologies to our own collective benefit. Besides, the only thing AI has done so far is give us a whole new set of sophisticated design tools that make designing easier, faster, more creative, and less error prone. Sounds like a false alarm!

This engenders two assumptions. If not faulty, they are certainly worthy of inspection. It’s assumed that new AI-driven technologies will spawn tools which:

- Will be tools of the architect and not someone else.

- Can be integrated into practice in a fashion and at a speed that makes a meaningful (and positive) change in the architect’s work and life.

Assumption 1: New AI-driven Design Tools will be Tools for Architects

I had a nettle in my brain when I began this article: I had already read a few of the more popular books and articles on the emergence of AI in architecture. I recall thinking at the time ‘I wonder why they assume these technologies are built for, or will be in the hands of, architects.’ To invalidate my suspicion, I began this piece by consuming a wide smattering of articles on the prospects of AI in architecture. Wherever an author had the courage to confront the naked question ‘will AI replace architects,’ they landed on the same assumption. Even in the most rigorous academic papers, when the subject turned to whether AI would replace architects, the conclusion was a breezy, a priori ‘Doesn’t seem likely’ or ‘that wouldn’t be good.’ These kinds of answers seemed oddly dismissive, given the existential nature of the question.

In their essay Artificial Intelligence for Human Design in Architecture, Renaud Danhaive and Caitlin Mueller of MIT’s Digital Structures Lab write:

“Indeed, in recent years some have proposed AI-driven platforms that generate architectural artifacts, such as floorplans or facades. However, when completely isolated from human designers, such aspirations may be missing the point: the human experience of the built environment, arguably the most critical component of architecture, will always be best understood by a human designer.4”

Similarly, Carl Christiansen (Co-Founder and CTO of Spacemaker AI) opines:

“But most importantly, to be adopted, workflows enabled by the AI would need to be attractive and compatible with the creative process of design. At its core, this process is both incremental and iterative in nature. A designer wants to interact with and augment a proposed design, and stakeholders want to have their say. Compromises must be made. An AI that creates “finished” design proposals by taking in information and turning it into designs, is neither iterative nor incremental in nature. Rather than augmenting the process, it replaces the process, becoming a competitor to the designer, not a complement.5”

In “Machine Learning: Architecture in the Age of Artificial Intelligence,” Phil Bernstein writes:

“. . . refining and implementing those decisions [on preferred design scenarios] will remain far beyond the reach of their [computers] capabilities, and human architects will always make the final determinations of what is best.6”

And adds more explicitly:

“Notably absent from this list, save perhaps the last item, are systems tasked with generating entire design solutions (at any scale) for a project. A central thesis of this book is that such systems will not be useful until far in the future - if at all. They are unlikely to provide useful insights and present an unnecessary existential threat to architects.7”

Even ChatGPT agrees! When I asked it whether AI was going to replace architects, it replied:

“It is unlikely that AI will completely replace architects in the near future. While AI and other advanced technologies are playing an increasingly important role in the design and construction industries, there are certain aspects of the architect’s job that require human skills and expertise.”

In response to this slate of conclusions, my question is why? Why assume that these tools are being developed for architects, or that architects will be their eventual users? Architects are the logical users of such tools, for now, in the same way that elevator operators were the logical operators of elevators, for a brief time, even after the safety elevator was invented. But as the tools grow in power, sophistication, and importantly, user-friendliness, why wouldn’t they just become tools of the client?

This core assumption - that the tools will be tools of architects - enables many other assumptions embedded in the cited passages above. When Danhaive & Mueller opine that “the human experience of the built environment. . . will always be best understood by a human designer,” on what evidence are we drawing that conclusion?

When Christiansen writes “Rather than augmenting the process, it replaces the process, becoming a competitor to the designer, not a complement” he implies that would be a bad thing. And it would, for architects. But others (real estate developers?) might consider it a good thing, worthy of capital investment and invention.

Bernstein lands lightly on what is probably the ultimate reason for the ubiquitous 1st assumption: “the creation of a design generator capable of even simple buildings is likely to have unintended and unpleasant consequences for the profession.”8

It would be bad for architects.

The invention of the safety elevator changed civilization and enabled the modern city. In its nascence, the safety elevator protected the lives of elevator operators, too, but that’s not whom it was for. No subsequent technological development of the elevator favored the operator, either. The elevator operator of old had several important, technological job requirements.

He had to:

- regulate the elevator speed - fast enough so that passengers wouldn’t get impatient, but not so fast that passengers were made uncomfortable.

- regulate the acceleration of the elevator in similar ways.

- precisely calibrate both so that the elevator stopped perfectly level with the building floor, so that riders wouldn’t trip on their way out.

- open and close the doors only after he had judged the elevator to be in a safe, stable position.

- respond to calls from various floors, to make sure passengers traveling in either direction weren’t asked to wait too long for a ride.

One by one, technological innovations eliminated these technical components of the elevator operator’s role:

In 1909, when the Singer Building opened in New York City, it was the first to have an ‘elevator supervisor,’ who monitored elevator calls and controlled and directed departures from a central location. Elevator operators were no longer the pilots of their own vessels.

In 1924, Otis installed the first automatic signal controller, dramatically curtailing the role of both the elevator operator and the elevator supervisor.

In 1929, the Haughton Elevator and Machine Co patented the first solid, automatic elevator door (until then elevators used manually operated gates, with obvious safety implications). The remaining safety responsibilities of the elevator operator were obviated. The elevator rider was then positioned to do everything that had been done by the elevator operator.

Such has been the general narrative of all technological advance: it eliminates professions by allowing someone who was previously the user to become the operator.

Assumption 2: New AI-driven Design Tools can be Integrated in Time

The second assumption is suspect because it presumes these technologies can be absorbed into an architect’s practice at a pace meaningful to architects, clients, and the world at large. We are all struggling to keep up as it is, and the debut of new technologies will only accelerate going forward. The ‘pace layer’ of technology inherently moves independently from our ability to absorb it - personally and into our practices.9

The two move not only at different speeds, but at different accelerations. No one can absorb new tools into their toolbox faster than the time required to learn to use them. And if new design tools are being generated faster than architecture’s learning curve, it seems unlikely that society will shelve such tools merely to keep architects gainfully employed. Given enough time, elevator operators might have learned some way to co-exist with, and add their own value to, the safety elevator. But elevators evolved quickly: from menacing industrial deathtraps to ubiquitous interior features within a single human lifetime.

If these two assumptions prove faulty, does that consign an architect’s role to the history books? To say we’ll never be replaced by technology is naive. At the other extreme, to say we’ll be imminently replaced is incendiary and reckless.

The McKinsey 3 Horizons approach offers a calm strategy for finding a middle ground. The key is to delineate between the 2nd and 3rd horizons - to methodically parse which technologies architects must grapple with here in the present, and which technologies we should keep a wary eye on for the future.

I arrived at a conclusion familiar to anyone who’s studied the issue: the easiest parts of an architect’s role to automate (and therefore the most at risk) were the ones farther down the design cycle. Bernstein provides a useful taxonomy, identifying some skills as requiring ‘perceptive’ knowledge (the most demanding - skills that are inherently creative, subjective and reliant on implicit knowledge) and others as requiring ‘integrative’ knowledge (the 2nd most demanding - skills that require an intelligent integration of procedural tasks to reach a measurable goal).

Areas of ‘perceptive’ knowledge would include:

- Analyzing and Understanding the Brief

- Generating Alternatives

- Evaluating and Selecting Alternatives

Areas of ‘integrative to perceptive’ knowledge would include:

- Getting, Assigning, Managing Staffing

- Managing Practice Operations

- Assigning and Coordinating Work

- Meeting, Managing Clients/Decisions

- Coordinating with Regulators

- Interfacing with Public/Communities

The only task areas that lie entirely within the ‘perceptive’ category are “Analyzing and Understanding the Brief,” “Generating Alternatives,” and “Evaluating and Selecting Alternatives,” suggesting they are the hardest to automate, and will be the last to fall under the wheel of advancing technology.

Interestingly, the three skills noted above would all fall under the general heading of ‘translations.’ To analyze and understand a brief, one must translate from spoken or verbal intent into a spatialized concept that expresses that intent. To generate alternatives, one must translate that spatialized concept into plans, sketches, and other information that can be evaluated by others. And to evaluate and select alternatives, one must translate in the other direction - taking visual and spatial representations and translating them back into the language of the client, to make sure the translation has been conducted faithfully.

How could all that possibly be imitated by a machine?

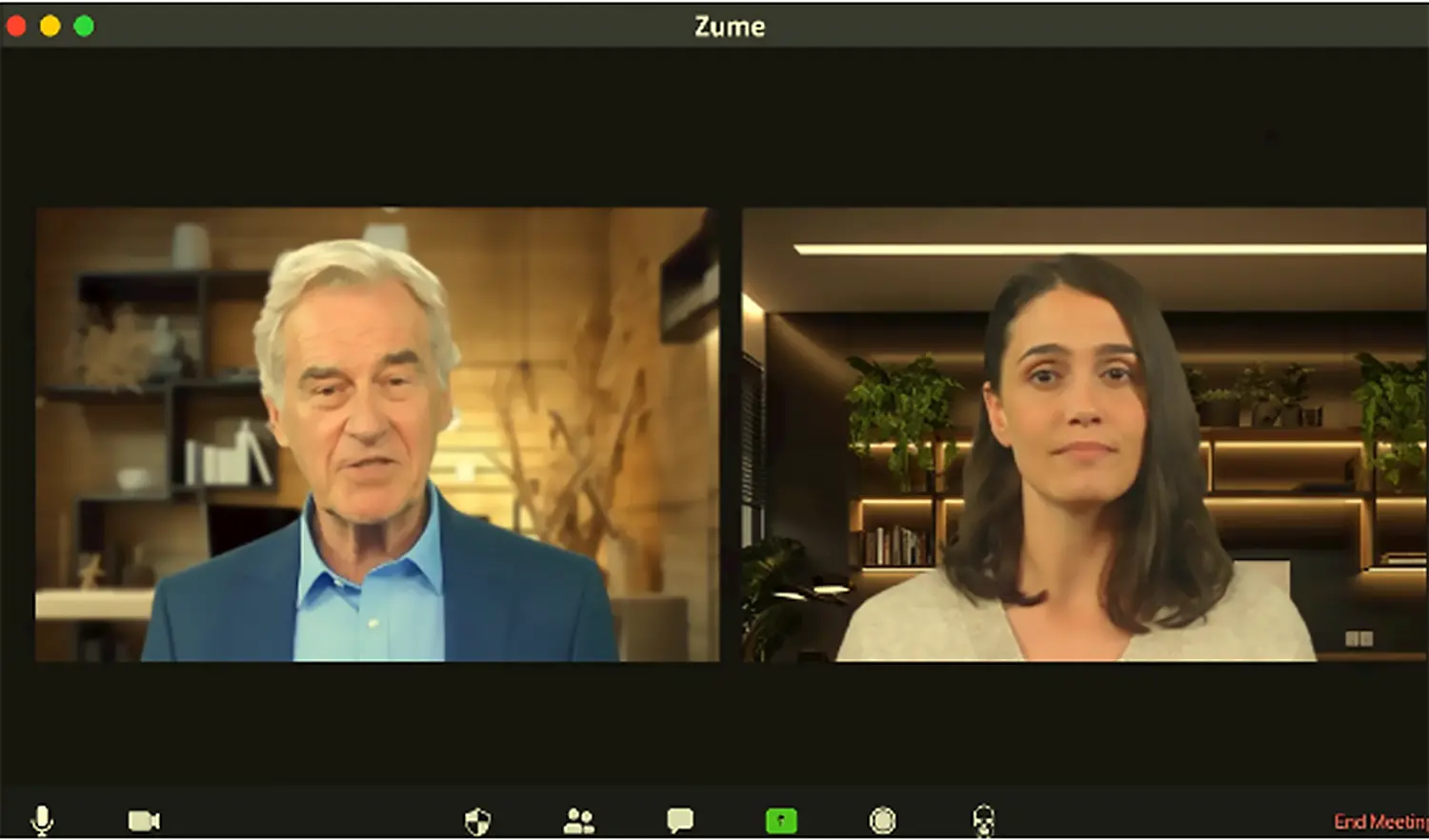

AN AI EXPERIMENT

As it turns out, it was quite easy. I tried it myself in the form of this NLGAI-generated, hypothetical interaction between a client, her architect and his design team. An AI Architect, an AI Client, an AI Engineer, and an AI Contractor have plenty to talk about, apparently. Be forewarned, the video is 24 minutes long, but it should only take you a few minutes to understand the implications. All content in the video is AI generated, including the dialogue, the designs, the budgets, etc. See for yourself, and tune in for Part 2 for a further discussion on how the interaction was made, its implications for practice, and thoughts on the future:

An AI-Generated Video Scenario: A CLIENT, her ARCHITECT, his ENGINEER, and their CONTRACTOR

[Click to Play the Video]

Part 2 of this series is now available. For those interested in an advance peek at how the video was made, we invite you to check out the technical addendum, available now.

Eric J. Cesal is a designer, educator, writer and noted post-disaster expert, having led on-the-ground reconstruction programs after the Haiti earthquake, the Great East Japan Tsunami and Superstorm Sandy. Cesal’s formal training is as an architect, with international development, economics and foreign policy among his areas of expertise. Cesal has been called “Architecture’s First Responder” by The Daily Beast for his work leading Architecture for Humanity’s post-disaster programs from 2010 to 2014. He has been interviewed widely on his work by publications such as The New Yorker, Architectural Record, Architect Magazine, Foreign Policy Magazine and Monocle.

Cesal is the co-founder of Design for Adaptation, a strategic planning consultancy that combines strategic foresight and adaptation strategies to help clients design more prosperous futures. Cesal is also widely known for his book, “Down Detour Road, An Architect in Search of Practice” (MIT Press, 2010), which sought to connect architecture’s chronic economic misfortunes with its failure to prioritize urgent social issues. He has taught at several of the world’s leading design schools, including Washington University in St. Louis and most recently at the College of Design at UC Berkeley. There, he concurrently served as the director of sustainable environmental design. He is currently developing a new course for Harvard’s Global Development Practice program called “Community-Based Responses to Disaster” to debut in the summer of 2023.

Cesal holds a B.A. in Architectural Studies from Brown University, as well as advanced degrees in architecture and construction management and an MBA from Washington University in St. Louis.

FOOTNOTES:

1Includes, but isn’t limited to, ChatGPT, Midjourney, DALL-E, Stable Diffusion. Any program that allows a layperson to generate written or visual content without coding.

2Lang, Shelley and Associates, a fictitious firm named in homage to Fritz Lang and Mary Shelley, two artists who tried to warn us about technology.

3Here, we take AI to mean all forms of artificial intelligence, including weak, strong, and general, as well as all forms of machine learning.

4Chaillou, S. (2022). Artificial Intelligence and Architecture: From Research to Practice. Birkhäuser, p. 129.

5Chaillou, S. (2022). Artificial Intelligence and Architecture: From Research to Practice. Birkhäuser, p. 165.

6Bernstein, P. (2022). Machine Learning: Architecture in the Age of Artificial Intelligence. RIBA Publishing, p. 165.

7Bernstein, P. (2022). Machine Learning: Architecture in the Age of Artificial Intelligence. RIBA Publishing, p. 118.

8Bernstein, P. (2022). Machine Learning: Architecture in the Age of Artificial Intelligence. RIBA Publishing, p. 118.

9‘Pace Layers’ is a concept popularized by futurist Stewart Brand in his book How Buildings Learn: What Happens After They’re Built (Brand, 1994), which was based on the concept of ‘shearing layers’ developed by architect Frank Duffy, former president of RIBA. Duffy’s original concept saw a building as a set of components that occupy different timescales: Shell (30-50 years), Services (15 years), Scenery (5 years), and Set (every few weeks or months).

Brand expanded this concept to Site (Eternal), Structure (30-300 years), Skin (20 years), Services (15 years), Space Plan (3 years) and Stuff (Constant). He subsequently expanded the thinking beyond buildings, to the scale of civilization, and organized civilization around Nature, Culture, Governance, Infrastructure, Commerce, and Fashion.

Here, we mean that the pace of technological innovation isn’t dependent on the pace of learning how to use it. The former is driven by culture, governance, infrastructure, etc. The latter is constrained by the human brain’s biological limits, and professional culture. This allows technological innovation to grow faster than our ability to understand or use it, under certain circumstances.